Conceptualizing a new revenue stream with workshops and in-person testing

- Industry: Retail

- Challenge: Determine if a product subscription model can increase in-store visits and purchase frequency.

- My Role: UX Design, Research, Feedback Analysis, Facilitation

Overview

In an effort to increase in-store visits and purchase frequency, leadership tasked me with conceptualizing and testing a new product subscription model. While home fragrance was the desired category for the program, other opportunities would also be explored. Additionally, the program needed to take little to no development resources away from other initiatives.

Discovery

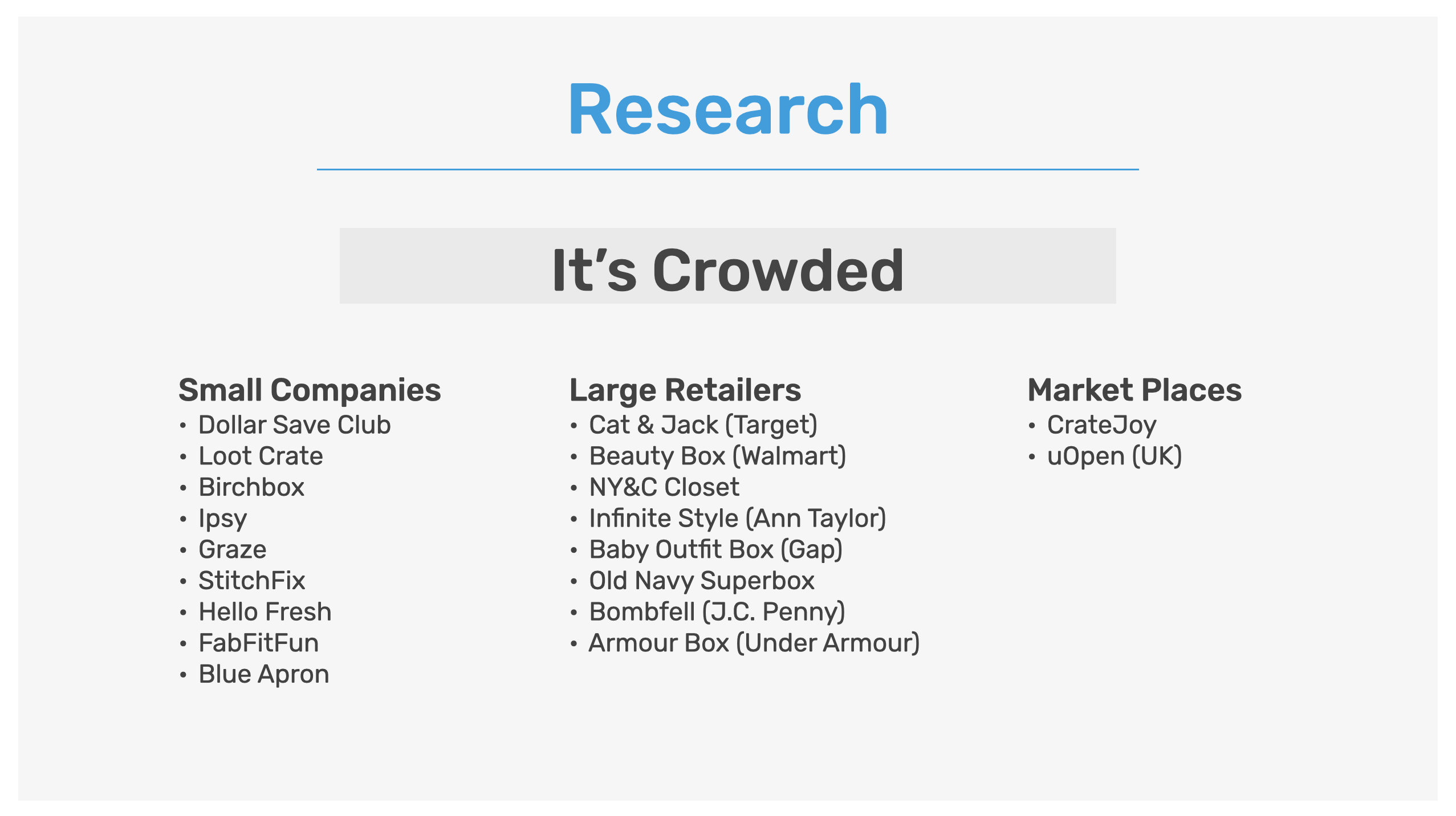

Because this was a new market for the company, there were a lot of unknowns, which was going to require an extensive research phase to fully understand the space. To me, the logical first step was to look at existing product subscriptions. I was mainly looking for patterns and established expectations that must be met in order for our offering to be successful. The theory was that failing to meet even the most basic customer expectations could derail the program before it really had a chance to succeed.

At the time of the project, it was clear that the market, while relatively new, was crowded. It was mainly made up of small startup companies, with larger retail brands also starting to get in on the action. Additionally, there was a third tier made up of marketplaces specifically designed to give individuals the ability to set up their own product subscription businesses. Given those findings, it became even more clear that there were established norms we could not violate. We also experienced those market norms firsthand as we signed up for and ordered a few of the top fragrance subscription boxes ourselves. Of the ones we tested, the signup process was easy and the packaging was usually nicely done, adding to our list of market norms.

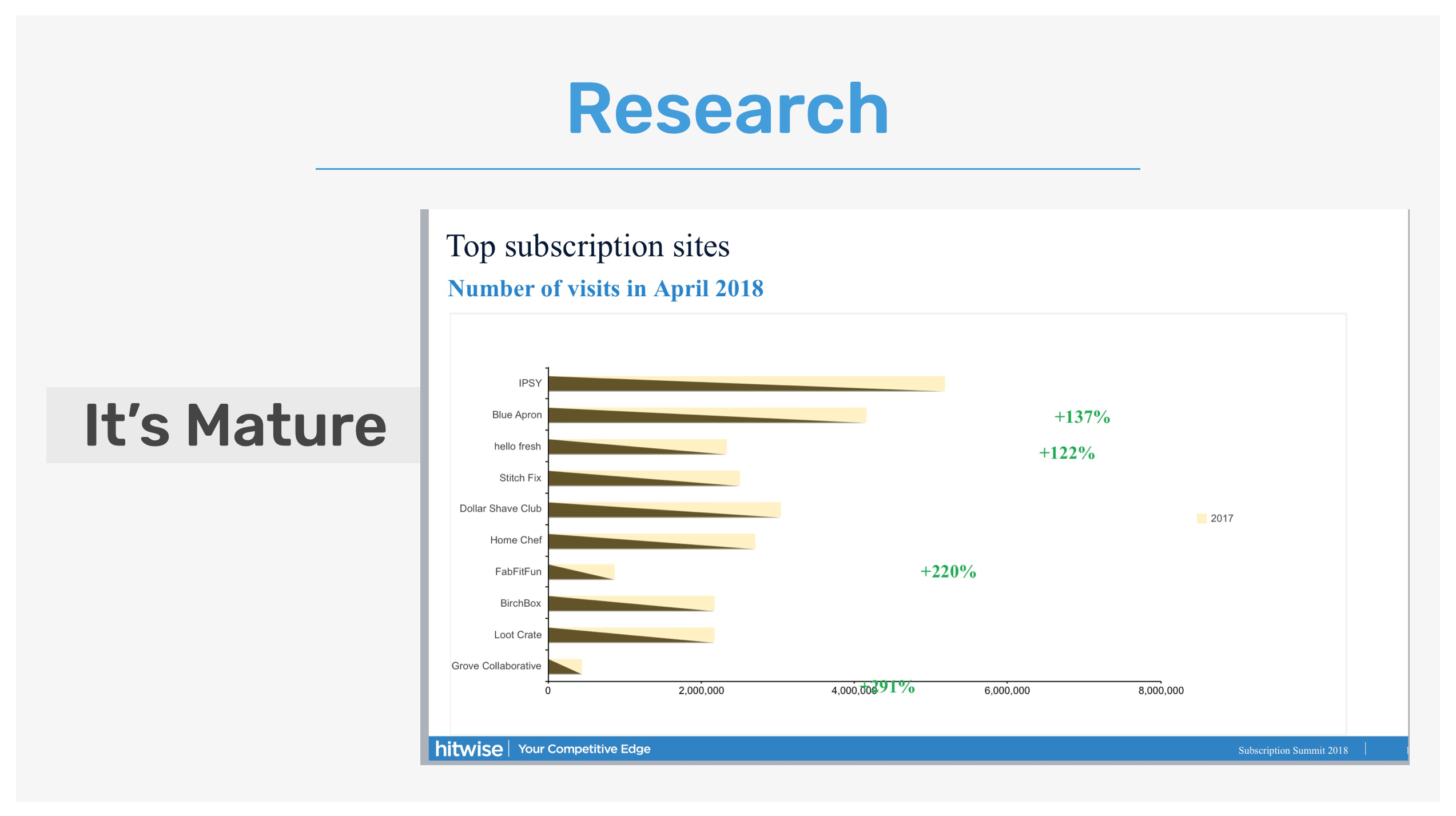

Our second major learning from the research was that the market was mature, or at least maturing. While U.S. online visits to subscription box sites had risen nearly tenfold over the past four years (again, at the time of research), to 41.7 million visits in April, traffic had slowed from the “exponential rates” of 2014 and early 2015, according to a study from online-traffic tracker Hitwise, which was based on a panel of 8.1 million U.S. online consumers. In addition to showing signs of maturity, those numbers also reflected a saturated market with a large number of companies, big and small, competing for their share.

However, it still was an attractive market given the amount of attention and growth potential. 18.5 million people visited at least one subscription box site in Q1 (Hitwise Data) and 15% of online shoppers signed up for one or more subscriptions on a recurring basis (McKinsey Study).

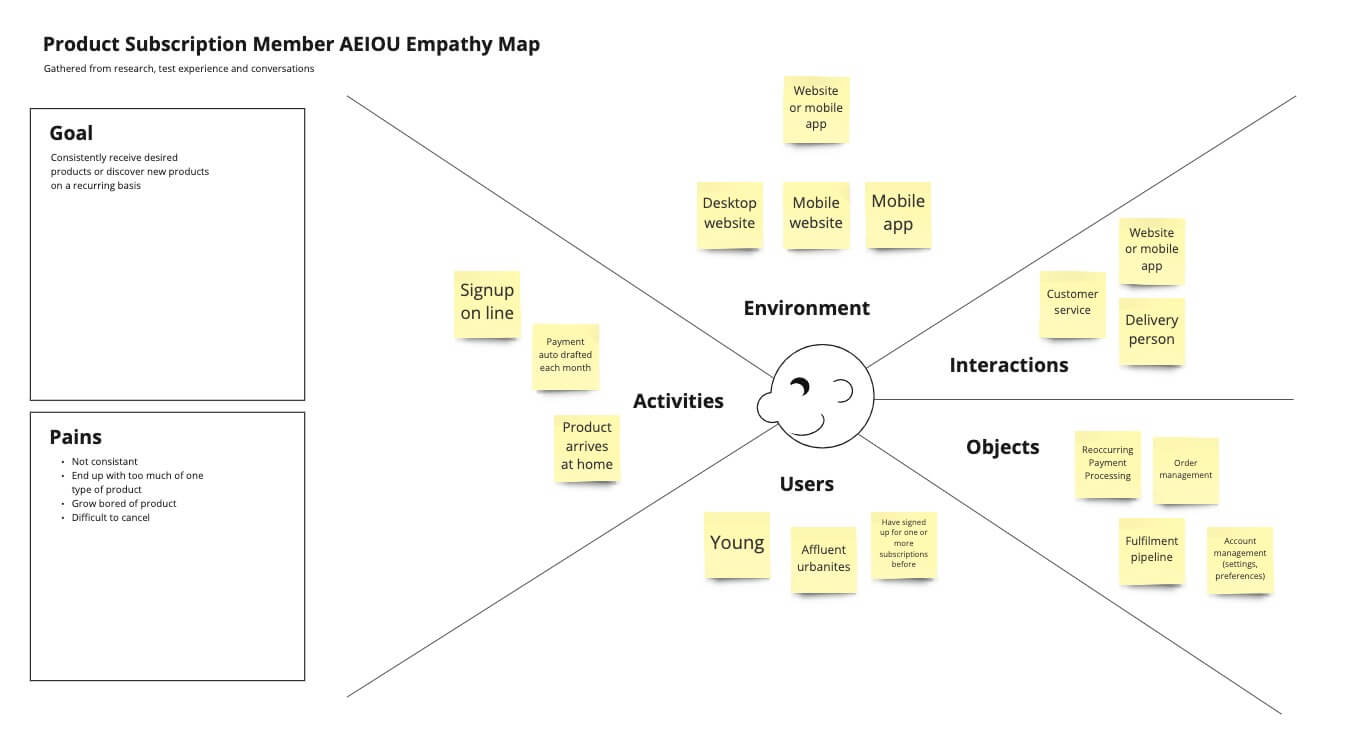

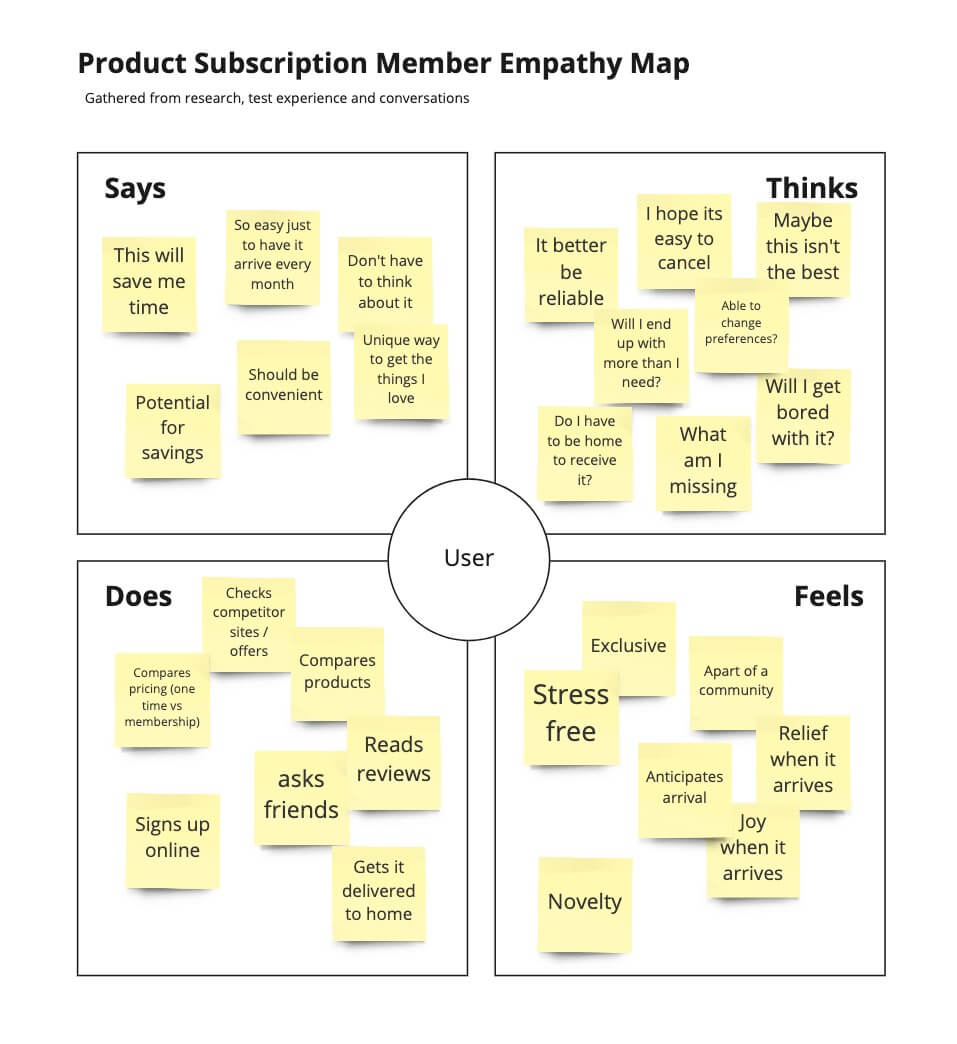

In addition to understanding the industry, it was important to understand its customers, specifically what makes a product subscription a success or not: why she'd sign up, why she'd cancel, and so on. Here I put together some empathy mapping boards in Miro (AEIOU and traditional), capturing data points from research, test experiences, and conversations.

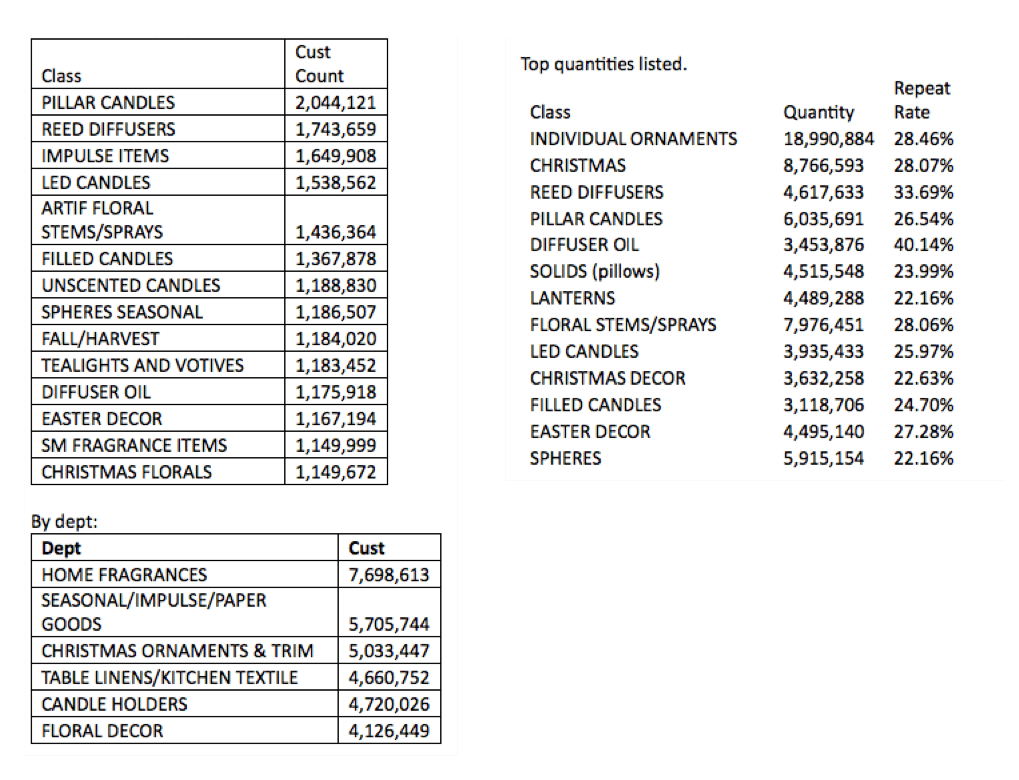

Now, with a greater understanding of the market and the expectations of its customers, the question became: did we even have customers that fit the product subscription persona? Given this was a new business model for the company, there was naturally no existing subscription data to review. I would instead need to look through current purchase data for patterns that would suggest a fit and/or opportunity. For that, I turned to our data science team to help pull together some insights.

Overall, what we learned was that demand for the target product(s) in the fragrance category was high, with a decent repeat purchase rate. We also found, in data not shown here (proprietary in nature), that those customers trended toward being our most loyal, with a recent boost in new customer acquisition from a candle BOGO campaign. The potential was at least intriguing, but what would that experience look like? Could we even operate a successful market offering?

Prototype / Conceptualize

Given what we learned in the research phase, it was decided we would proceed with the conceptualization of two approaches: a more traditional "Ship First" approach and a unique "Store First" approach.

To understand all the different resources needed and define a testable experience, each approach was put through a decision/brainstorming workshop, a Lightning Decision Jam (LDJ). Each workshop was designed to uncover all the benefits, problems, and solutions, with the takeaways being actionable tasks identified based on their level of effort and impact. Those tasks would then be used to define what our experience would look like.

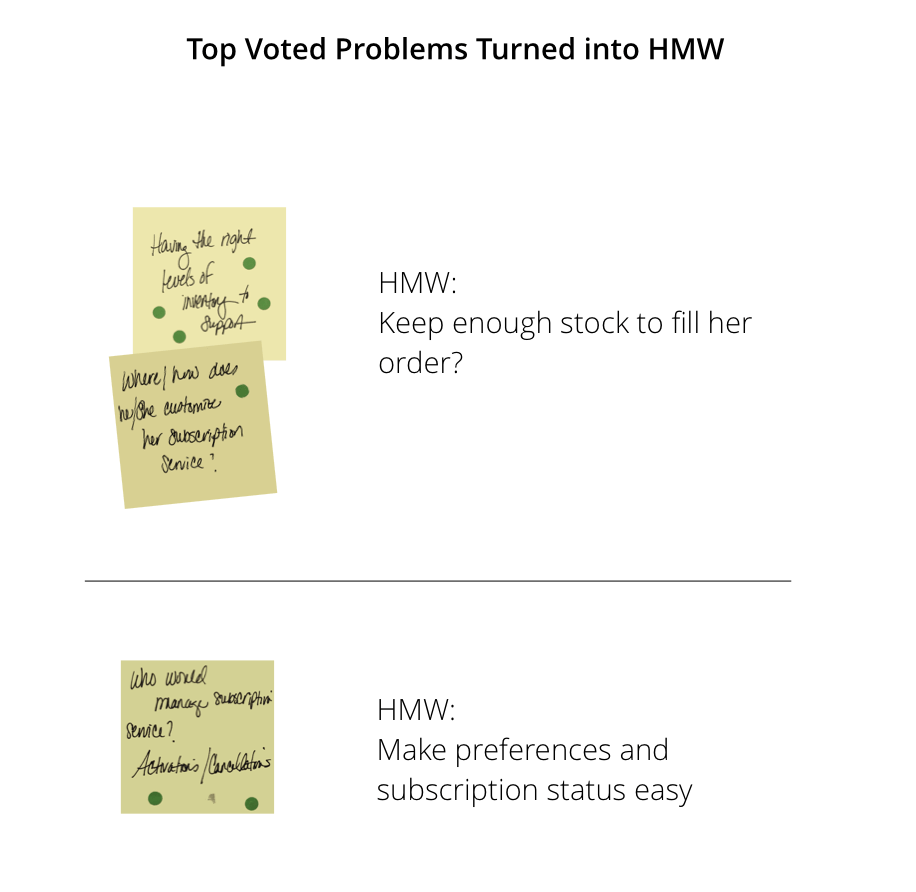

Starting with the positives/benefits and then moving into any issues/problems we might encounter, everyone individually wrote their thoughts down on post-its and placed them on a whiteboard. The problem statements were then prioritized using a dot vote exercise designed to allow the group to surface the ones they felt most strongly about.

The problems that received the most votes were then rewritten in the form of a standardized challenge using the "How Might We" (HMW) format, making them more testable.

Now with our HMW statements created, our next step was to ideate on potential solutions. This was again done without discussion and followed the same order as getting to the HMW. Everyone captured their solutions on post-its, placed them on the whiteboard, and prioritized with dot voting.

From here, the focus turned to identifying which of the top solutions were the most important to pursue. To do this, we needed to determine which solutions were simple enough to try right away and which should be added to a project backlog. This was done through an effort/impact exercise, with the solutions landing in the top left square becoming our first priority.

Lastly, we took those solutions in the "sweet spot" and made them actionable by giving each at least three steps/tasks that would lead us to a testable experience. For this project, the result was then presented to leadership to decide which approach we would pursue and test.
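For anyone who would rather run this step in a spreadsheet or script than on a whiteboard, the effort/impact sort can be sketched roughly as below. This is only an illustration, not what we used in the workshop: the 1 to 5 scoring scale, the midpoint of 3, the quadrant labels other than the top left, and the placeholder solution names are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Solution:
    name: str    # placeholder label, not a real workshop output
    effort: int  # assumed scale: 1 (low) to 5 (high)
    impact: int  # assumed scale: 1 (low) to 5 (high)


def quadrant(solution: Solution, midpoint: int = 3) -> str:
    """Place a solution on a 2x2 grid: impact is the vertical axis,
    effort is the horizontal axis. Labels are illustrative only."""
    high_impact = solution.impact >= midpoint
    low_effort = solution.effort < midpoint
    if high_impact and low_effort:
        return "do now"       # top left: high impact, low effort
    if high_impact:
        return "backlog"      # top right: high impact, high effort
    if low_effort:
        return "maybe later"  # bottom left: low impact, low effort
    return "drop"             # bottom right: low impact, high effort


def first_priorities(solutions: list[Solution]) -> list[Solution]:
    """Return the top-left solutions, highest impact first,
    breaking ties by lowest effort."""
    picks = [s for s in solutions if quadrant(s) == "do now"]
    return sorted(picks, key=lambda s: (-s.impact, s.effort))


# Hypothetical scores, made up purely to exercise the functions.
SAMPLE = [
    Solution("Solution A", effort=2, impact=5),
    Solution("Solution B", effort=4, impact=5),
    Solution("Solution C", effort=1, impact=2),
    Solution("Solution D", effort=5, impact=1),
    Solution("Solution E", effort=1, impact=4),
]

if __name__ == "__main__":
    for s in first_priorities(SAMPLE):
        print(s.name, quadrant(s))
```

In a live session the "scores" are really just where the group agrees to stick each post-it, so the numbers here stand in for that conversation rather than replace it.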

User Testing

After we presented all of our findings, leadership decided to explore the Store First approach, due to the attachment opportunity, limited resources, and logistical challenges uncovered through discovery. Using an experience crafted from our workshops, it was time to organize a way to test the concept.

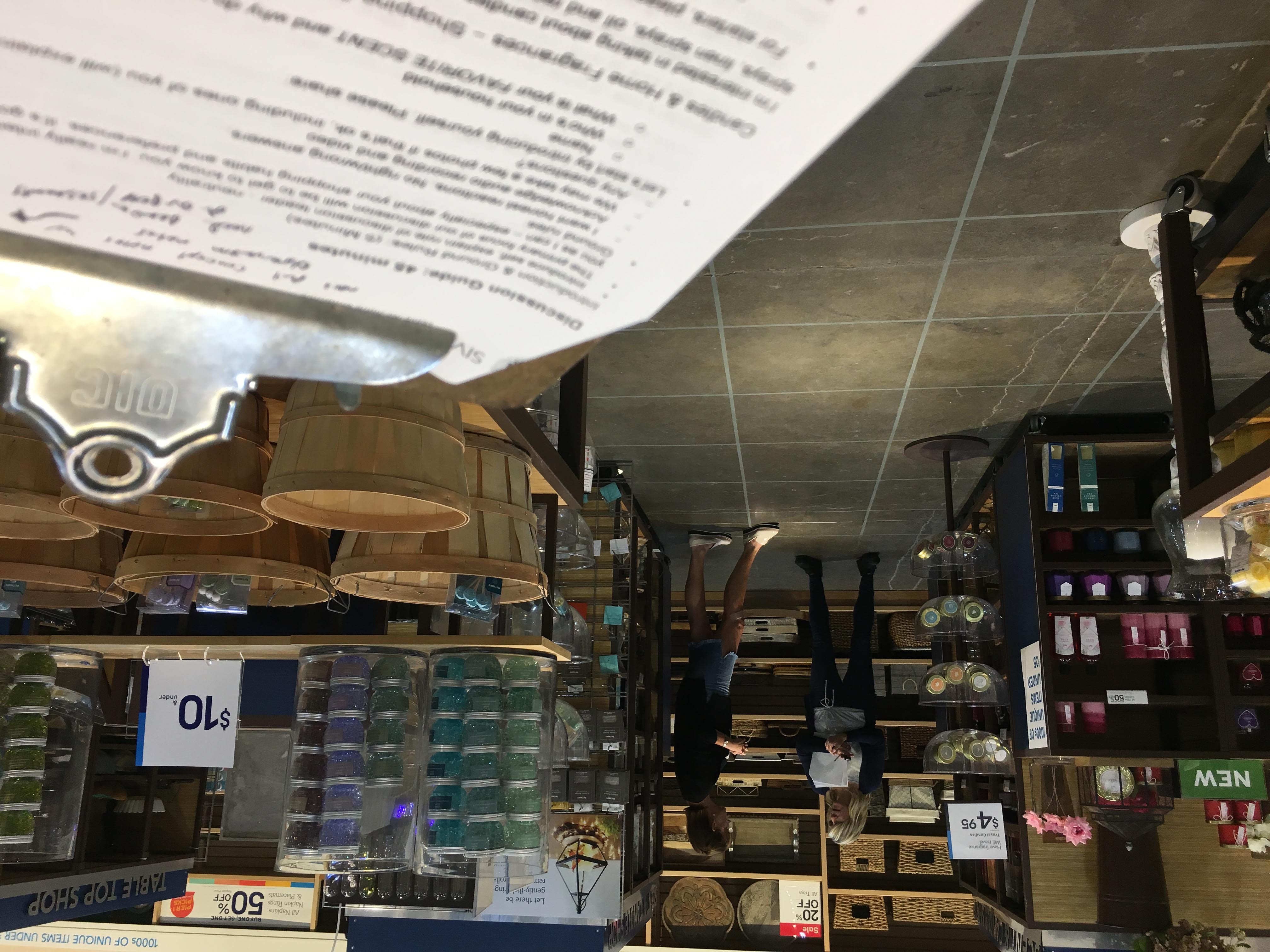

Fortunately, one of our agency partners was already scheduled to be in town for an unrelated focus group, which provided the perfect opportunity to also utilize them for customer recruitment and interview facilitation. So while they recruited against our criteria, I worked on developing the testing structure and logistics.

Because this was a Store First approach, we decided to utilize our mock store. This gave us a unique opportunity to not only talk to our target user about the new membership offering, but to also have her actually walk through the experience of fulfilling it. We set up a comfortable interview area, separated from the mock store area with quick standup barriers, which also served as a nice way to simulate walking through the front door of the store for the shop-along portion.

The barriers also helped create a makeshift command center for debriefings, scheduling alternate participants for any no-shows, charging GoPros and, most importantly, tea breaks.

The first portion of our concept test occurred in our interview area. Here we first introduced the participant to the team, along with an overview of what the primary focus of our discussion with her would be. From there, our interviewer would work through the test structure, starting with shopping and usage: a conversation focused on gathering each individual's shopping habits, reasons for a purchase, and preferences. Next, we introduced her to the concept, gathering her initial reactions, followed by a probing discussion of what would make it better or worse.

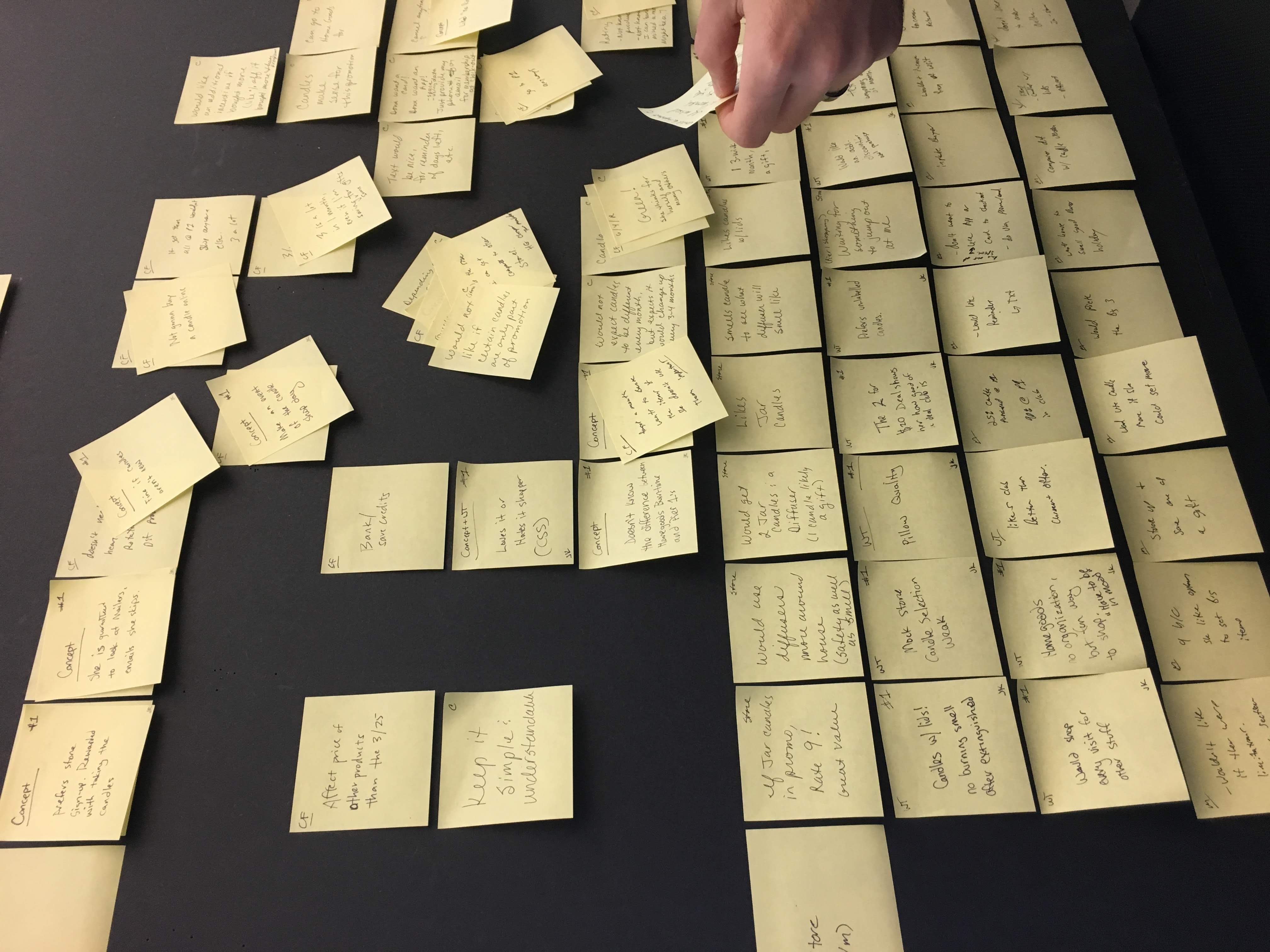

During the interview, my team and I took on the role of observers, jotting down any interesting statements on post-its. These were written in a predefined structure to keep them consistent and easier to review during the analysis phase.

After the concept discussion, we invited each participant to physically walk through the experience of fulfilling that month's membership offer. This was done by having her enter the mock store through our makeshift entrance and walk us through how she would navigate this hypothetical shopping trip. Here we gathered insights through observations (what direction did she go first, what items did she look at along the way, did she find the fragrance section, etc.) along with interview prompts and questions (mainly around what she was thinking as she shopped, impressions, reasons for item selection, etc.).

Again, my team and I stayed back and followed along with our preplanned script, documenting observations on our growing mountain of post-its.

After each session we'd debrief, sharing favorite observations while I collected everyone's post-its in a ziplock bag for analysis back at the home office.

Analysis

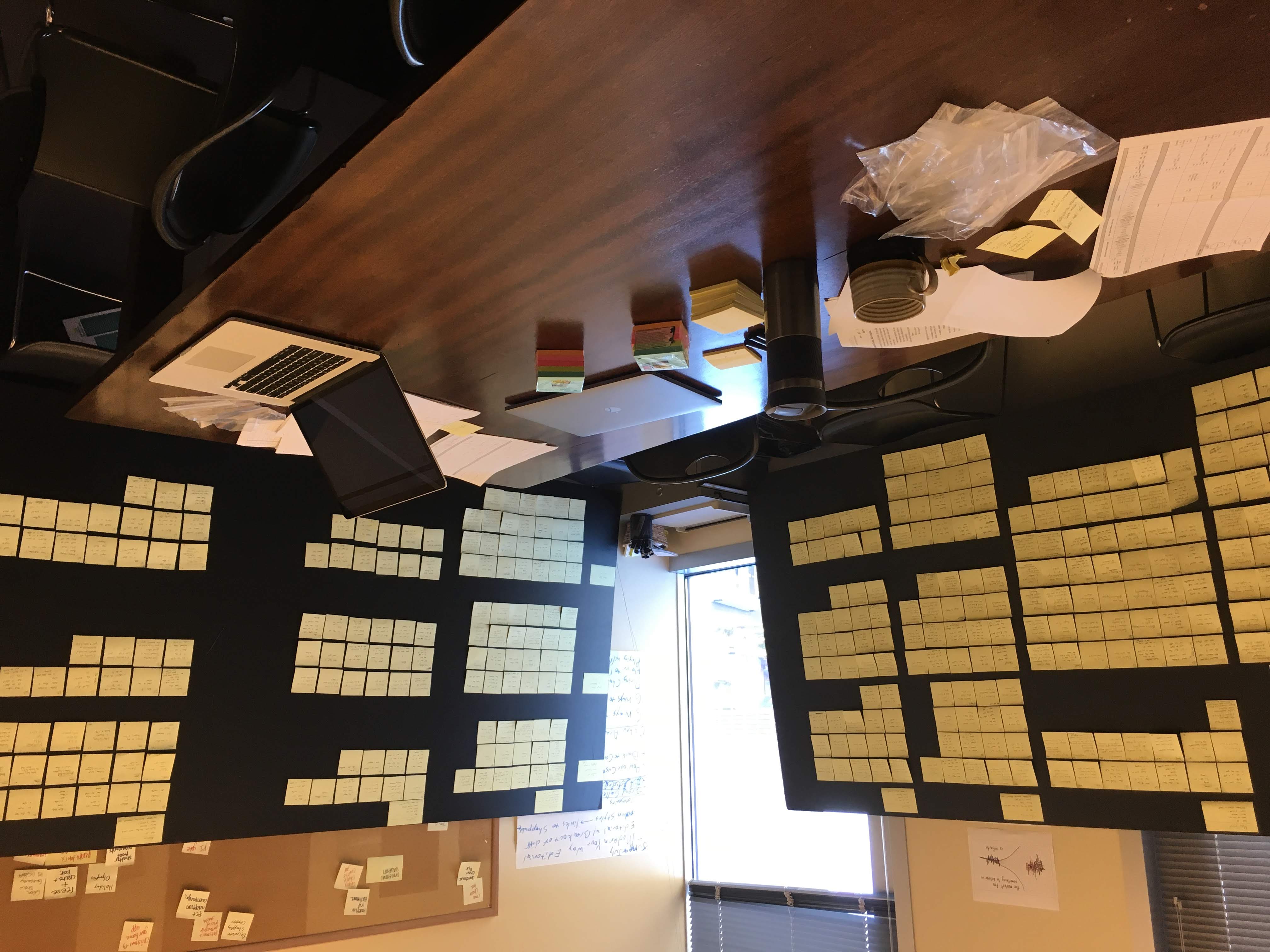

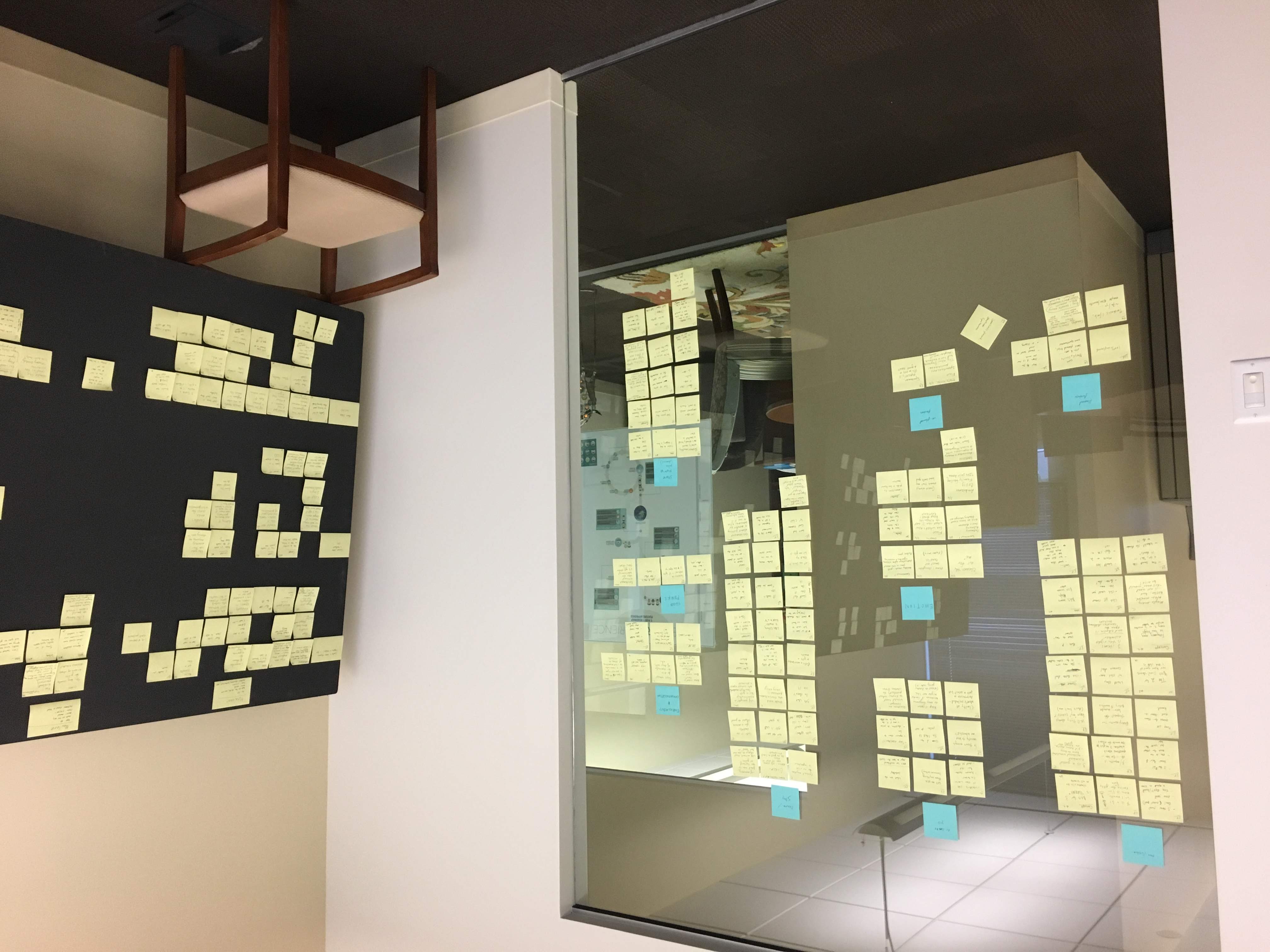

Once all the interviews were completed, it was time to regroup back at the home office, analyze, and look for patterns. For this part of the research, I took over one of our conference rooms, lined it with foam core boards, and began to organize all of our notes. On the left-hand side I placed labels for the different sections of the test structure (Intro, Shopping and Usage, Concept Introduction, and Shop-along), then across the top a label for each participant. From there it was mostly fill in the blanks. Since our note taking during the sessions followed a structure, this task was easy to do. Each note contained a very short, one-line statement, with initials indicating which section of the test the note was captured in. Bagging up everyone's stack after each interview kept them all grouped by participant.

As I placed the notes on the boards, the team and I stacked similar statements to be de-duped, often resulting in a quick conversation around which statement most accurately and clearly captured the observation. The result was a massive collection of beautifully organized user feedback.

Individually, our participants shared a wealth of information about their shopping habits and likelihood of participating in our membership offering. Now it was time to determine whether, collectively, they told us that their participation in the membership program would be profitable for the company.

To do this, I ran an affinity mapping exercise with the team, where we'd take some time to study the organized notes, look for similarities, and then group those under emerging themes.
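The board layout itself is simple enough to mirror digitally: rows are test sections, columns are participants, and each cell holds that participant's notes for that section. The snippet below is a rough sketch of that idea, not a tool we actually used. The `Note` fields, the participant IDs, and the placeholder statements are all hypothetical.

```python
from collections import defaultdict
from typing import NamedTuple

# Row labels taken from the test structure described above.
SECTIONS = ["Intro", "Shopping and Usage", "Concept Introduction", "Shop-along"]


class Note(NamedTuple):
    participant: str  # hypothetical ID, e.g. "P1"
    section: str      # must be one of SECTIONS
    statement: str    # short, one-line observation


def build_grid(notes: list[Note]) -> dict[tuple[str, str], list[str]]:
    """Group notes into (section, participant) cells, like post-its on the board."""
    grid: dict[tuple[str, str], list[str]] = defaultdict(list)
    for note in notes:
        if note.section not in SECTIONS:
            raise ValueError(f"Unknown section: {note.section!r}")
        grid[(note.section, note.participant)].append(note.statement)
    return dict(grid)


# Placeholder notes, invented only to show the shape of the data.
SAMPLE_NOTES = [
    Note("P1", "Intro", "placeholder statement 1"),
    Note("P1", "Shop-along", "placeholder statement 2"),
    Note("P2", "Shop-along", "placeholder statement 3"),
    Note("P1", "Shop-along", "placeholder statement 4"),
]

if __name__ == "__main__":
    grid = build_grid(SAMPLE_NOTES)
    for section in SECTIONS:
        for participant in ("P1", "P2"):
            print(section, participant, grid.get((section, participant), []))
```

Rejecting unknown section labels up front plays the same role as the predefined note structure did in the room: it keeps every note sortable without a second look.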

Conclusion

The themes uncovered during our affinity mapping and session playbacks painted a clear picture: there were opportunities for a positive and unique in-store experience, but the concept was also plagued with hurdles around cost and longevity. Based on key ROI metrics uncovered during our research, the offering's profitability hinged on repeat visits and attachment. Given that the hurdles pointed to this potentially not being the case, even with our most loyal fans of these products, it was decided that the risk was too great to proceed with a membership program in this form.

While the end result was not what the team or leadership was hoping for, the process of discovery, conceptualizing, and testing proved to be a valuable exercise, potentially saving the company millions in costs and lost revenue from a failed venture.

About Me

I’m a Fort Worth-based Product/UX Designer with ten years of experience conceptualizing and crafting digital products.

Over that time, no matter the project, I’ve found that there are two constants that determine a successful project: collaboration and user input. If they can't be had directly, they can be had indirectly through a multitude of project “hacks” that achieve the same results. As long as all involved are iterating towards the same goal, and said goal is beneficial for the person actually using the product, your project will be amazing.

Currently, I’m on the e-commerce team at Pier 1 working to strategically solve business objectives while also creating ideal experiences for our customers.

Previously, I was at First Command Financial Planning and was a freelance contractor before that. At both stops I served in a designer role, facilitating concepts, prototyping, and crafting beautifully functional UIs, with a little bit of HTML/CSS work thrown in for good measure.